There's a pattern that plays out in businesses across every sector. A team deploys an AI tool with high expectations. Within weeks, people stop using it. Within months, the organisation concludes that "AI doesn't work for us."

But the AI wasn't the problem. The data was.

The Problem With Generic AI

Most commercial AI tools are built on large language models trained on publicly available internet data. They know a remarkable amount about a remarkable number of things. But they don't know your products. They don't know your processes. They don't know the terminology your team uses, the equipment models you maintain, or the procedures you follow.

When an engineer asks a generic AI tool how to troubleshoot a fault on a specific piece of equipment, the AI does what it's designed to do — it generates a plausible-sounding answer based on general knowledge. The answer might reference a different manufacturer's equipment. It might describe a procedure that doesn't match your safety protocols. It might use terminology that doesn't align with your internal systems.

The answer sounds right. But it's wrong for your context. And in operational environments, that gap between "sounds right" and "is right" can be dangerous.

What Actually Goes Wrong

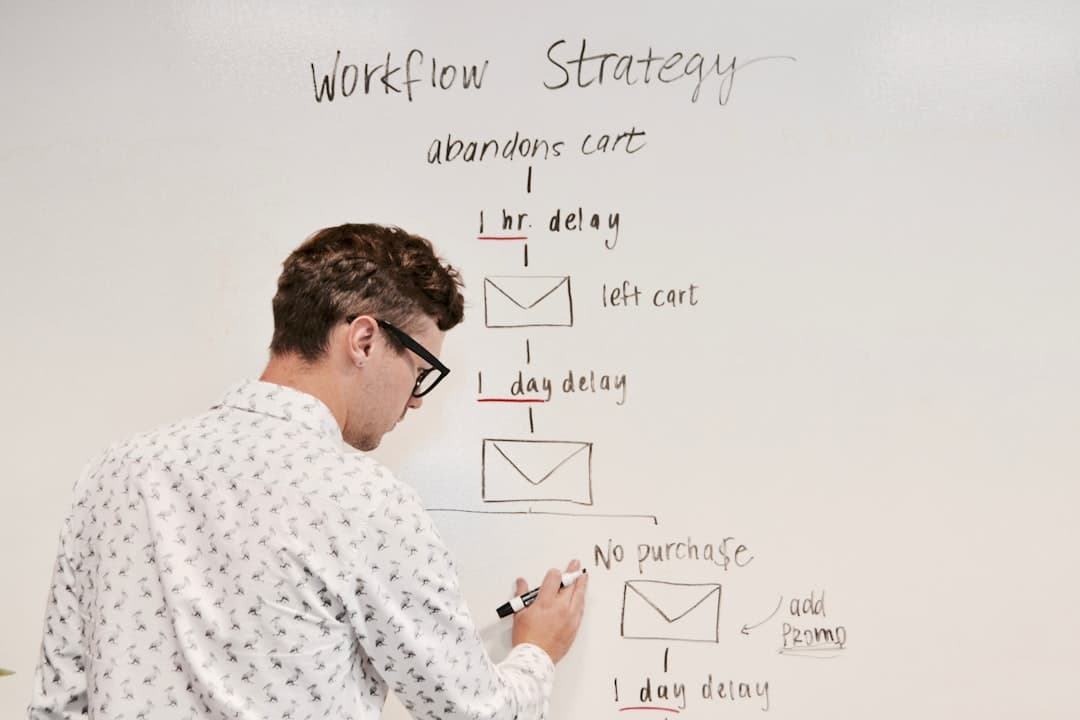

The failure mode is predictable and follows a consistent trajectory:

Phase 1: Initial enthusiasm. The tool is deployed. A few early adopters try it and get some useful general answers. Expectations are high.

Phase 2: Trust erosion. Staff start asking domain-specific questions — the ones they actually need help with. The answers are vague, generic, or subtly incorrect. A maintenance engineer gets a procedure that doesn't match the equipment. A technician gets a part number that doesn't exist in your system.

Phase 3: Abandonment. Word spreads quickly: "The AI gives wrong answers." Usage drops to near zero. The tool becomes shelfware.

Phase 4: Wrong conclusion. Leadership concludes that AI isn't mature enough for their industry, or that their business is "too specialised" for AI. The real issue — that the AI had no access to the organisation's actual knowledge — goes undiagnosed.

This pattern repeats across manufacturing, engineering, HVAC, chemical processing, and dozens of other sectors. The technology works. The implementation doesn't — because it was never connected to the data that matters.

Why Your Own Data Changes Everything

When an AI system is trained on your organisation's actual documentation, in the sense that it can search and draw on that material whenever it answers, the dynamic shifts completely. Instead of generating answers from general knowledge alone, it retrieves and synthesises information from your specific sources.

Ask about troubleshooting a fault on unit 7, and the AI references the maintenance manual for that exact unit. Ask about the warranty process for a specific product line, and it walks through your actual procedure. Ask about safety protocols for a chemical handling process, and it cites your own COSHH assessments.

The answers become specific, accurate, and verifiable. Staff can check the sources. They can see where the information came from. Trust builds quickly because the AI is demonstrably working with the right material.

This isn't a marginal improvement. It's the difference between a tool nobody uses and a tool that becomes indispensable.

What "Your Data" Actually Includes

When we talk about training AI on your own data, businesses often assume they need to create new content from scratch. They rarely do. The knowledge already exists — it's just scattered, buried, or locked in formats that aren't easy to search.

The data sources that typically feed an effective AI system include:

- Technical manuals and equipment guides — manufacturer documentation, installation guides, service manuals

- Standard operating procedures (SOPs) — step-by-step processes for routine and non-routine tasks

- Troubleshooting procedures — fault trees, diagnostic workflows, known issue databases

- Safety protocols — risk assessments, COSHH data, permit-to-work procedures, method statements

- Training materials — onboarding documents, competency frameworks, assessment guides

- Engineering drawings and specifications — where relevant, extracted data from technical drawings

- Historical service records — past tickets, resolution notes, maintenance logs

- Expert interviews — recorded and transcribed conversations with experienced staff who carry knowledge that was never formally documented

Most organisations are surprised by how much usable material they already have. The challenge isn't creating content — it's structuring what exists so an AI system can use it effectively.

How Tarin Makes This Practical

Tarin's implementation process starts with your existing documentation. We ingest your manuals, SOPs, technical guides, and internal knowledge base — in whatever format they exist. PDFs, Word documents, spreadsheets, wiki pages, scanned documents — all of it.

The system structures this information, identifies relationships between topics, and builds a knowledge graph specific to your organisation. When your team asks a question, the AI searches this structured knowledge base, retrieves the most relevant information, and presents it conversationally — with source references so the answer can be verified.
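To make the retrieve-then-cite idea concrete, here is a minimal sketch in Python. It is not Tarin's implementation: it swaps the knowledge graph for a simple word-overlap (cosine) score over a handful of text chunks, and every file name, section label, and function name in it is hypothetical. It only illustrates how an answer can stay attached to the document it came from.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    source: str  # hypothetical document name and section
    text: str


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def score(query: str, chunk: Chunk) -> float:
    """Cosine similarity between the term-count vectors of query and chunk."""
    q = Counter(tokenize(query))
    c = Counter(tokenize(chunk.text))
    dot = sum(q[t] * c[t] for t in q)
    norm = math.sqrt(sum(v * v for v in q.values())) * math.sqrt(
        sum(v * v for v in c.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[Chunk], top_k: int = 2) -> list[Chunk]:
    """Return up to top_k chunks with a non-zero score, best match first."""
    scored = [(score(query, ch), ch) for ch in chunks]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [ch for s, ch in scored[:top_k] if s > 0]


def answer_with_sources(query: str, chunks: list[Chunk]) -> str:
    """Quote the retrieved passages, each followed by its source reference."""
    hits = retrieve(query, chunks)
    if not hits:
        return "No matching documentation found."
    return "\n".join(f"{h.text} [source: {h.source}]" for h in hits)


# Illustrative content only; these documents are invented for the example.
CHUNKS = [
    Chunk(
        "unit-7-maintenance-manual.pdf, section 4.2",
        "Unit 7 pressure fault: isolate the pump, check the relief valve, "
        "then reset the controller.",
    ),
    Chunk(
        "warranty-procedure.docx, step 3",
        "Warranty claims require the serial number and the original proof "
        "of purchase.",
    ),
    Chunk(
        "coshh-assessment-12.pdf",
        "Wear gloves and eye protection when handling the solvent.",
    ),
]

results = retrieve("How do I troubleshoot a pressure fault on unit 7?", CHUNKS)
print(answer_with_sources("How do I troubleshoot a pressure fault on unit 7?", CHUNKS))
```

A production system would typically use semantic search rather than raw word overlap, but the principle is the same: when nothing in the documentation matches, the sketch says so instead of improvising an answer.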

There's no requirement to rewrite your documentation or create new content. The knowledge your business has accumulated over years becomes instantly accessible, searchable, and conversational.

And because the system learns from usage — tracking which questions get asked, which answers get accepted or corrected, and where gaps exist — it improves continuously. Your documentation becomes a living knowledge base rather than a static archive.
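As a rough illustration of how usage tracking can surface gaps, the sketch below tallies accepted and rejected answers per topic and flags topics where most answers were not accepted. The `Feedback` record, the `find_gaps` function, and the thresholds are assumptions made for this example, not a description of how Tarin stores or analyses feedback.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Feedback:
    topic: str      # hypothetical topic label attached to a question
    accepted: bool  # True if the user accepted the answer as given


def find_gaps(
    log: list[Feedback],
    min_questions: int = 2,
    max_acceptance: float = 0.5,
) -> list[str]:
    """Return topics asked at least min_questions times whose
    acceptance rate is at or below max_acceptance, sorted by name."""
    totals: dict[str, int] = defaultdict(int)
    accepted: dict[str, int] = defaultdict(int)
    for entry in log:
        totals[entry.topic] += 1
        if entry.accepted:
            accepted[entry.topic] += 1
    return sorted(
        topic
        for topic, count in totals.items()
        if count >= min_questions and accepted[topic] / count <= max_acceptance
    )


# Invented sample log for illustration.
LOG = [
    Feedback("unit 7 faults", True),
    Feedback("unit 7 faults", True),
    Feedback("unit 7 faults", False),
    Feedback("warranty process", False),
    Feedback("warranty process", False),
    Feedback("solvent handling", False),
]

gaps = find_gaps(LOG)
print(gaps)
```

Topics flagged this way point to documentation that is missing, outdated, or hard to retrieve, which is where new source material or an expert interview is likely to help most.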

If you'd like to see how your existing documentation could power an AI agent for your team, request a demo.
