AI Strategy

Customising AI Models for Industry-Specific Applications

Different industries need different AI. Here's why custom models trained on your sector's terminology, regulations, and workflows outperform generic alternatives.


Every conversation about AI in the workplace eventually hits the same nerve: "Will it replace us?"

It's a fair question. But it's the wrong framing. The most effective AI deployments don't replace people — they remove the drudgery that prevents people from doing their best work. Nowhere is this clearer than in first-line support.

Think about the questions your most experienced staff field every day. Where's the latest version of the maintenance schedule? What's the procedure for recalibrating sensor X? How do I log a warranty claim for unit Y?

These aren't complex questions. They have clear, documented answers. But they get asked dozens of times a week because finding the answer in a 400-page manual or a buried SharePoint folder takes longer than walking over to someone who knows.

First-line AI support handles this layer — the repetitive, predictable volume. The same 20 questions asked 100 times. It doesn't attempt to replace the judgement calls, the troubleshooting of novel problems, or the nuanced decisions that require years of experience. It resolves the predictable stuff so your people can focus on the rest.

When senior engineers spend a quarter of their day answering basic questions from colleagues, two things suffer: their own productivity and their job satisfaction. Nobody with 15 years of domain expertise wants to be used as a human search engine.

First-line AI support changes this dynamic in several concrete ways:

This isn't theoretical. Organisations that implement first-line AI support consistently report that their teams welcome it — once they see it handles the noise without touching the work they care about.

The key to effective first-line AI support is a system that knows its own limits. A well-built system operates on a simple principle: answer what you can confidently, and escalate what you can't, with full context.

Here's how the escalation model works in practice:

Step 1: Confident resolution. The AI receives a query, searches the organisation's documentation, and provides a clear answer with source references. If the confidence is high and the answer is well-supported, the query is resolved.

Step 2: Uncertainty flagging. When the AI encounters ambiguity — multiple possible answers, incomplete documentation, or a question outside its training scope — it flags this transparently. It doesn't guess. It doesn't fabricate.

Step 3: Contextual escalation. The query is passed to a human expert, but not as a cold handoff. The AI includes what was asked, what it found, what it was uncertain about, and any relevant documentation it identified. The human starts with context, not from scratch.

Step 4: Learning from resolution. When the human resolves the escalated query, that resolution feeds back into the system. The AI learns from the correction and handles similar queries more effectively next time.

Over time, the ratio shifts. More queries resolve at the AI layer. Fewer reach human experts. But the humans remain in the loop — reviewing, correcting, and teaching the system.
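To make the escalation model concrete, here is a minimal Python sketch of the four steps. It is an illustration under stated assumptions, not a description of how any particular product works: the word-overlap score stands in for a real retrieval model, the 0.5 threshold is arbitrary, and the names `Entry`, `Outcome`, `handle_query` and `record_resolution`, along with the sample documentation, are all invented for the example.

```python
import re
from dataclasses import dataclass
from typing import List, Optional

CONFIDENCE_THRESHOLD = 0.5  # assumed cut-off; a real deployment would tune this


@dataclass
class Entry:
    source: str         # where the text came from, e.g. a manual or SOP name
    text: str           # the documented answer
    question: str = ""  # original query, set only for human-resolved entries


@dataclass
class Outcome:
    resolved_by: str                 # "ai" or "human"
    answer: Optional[str] = None
    sources: Optional[List[str]] = None
    handoff: Optional[dict] = None   # context passed to the human expert


def words(s: str) -> set:
    return set(re.findall(r"[a-z0-9]+", s.lower()))


def confidence(query: str, entry: Entry) -> float:
    """Toy score: share of query words that appear in the entry."""
    q = words(query)
    return len(q & words(entry.question + " " + entry.text)) / len(q) if q else 0.0


def handle_query(query: str, kb: List[Entry]) -> Outcome:
    scored = sorted(((confidence(query, e), e) for e in kb),
                    key=lambda pair: pair[0], reverse=True)
    best_score, best = scored[0] if scored else (0.0, None)
    # Step 1: confident resolution, with a source reference.
    if best is not None and best_score >= CONFIDENCE_THRESHOLD:
        return Outcome("ai", answer=best.text, sources=[best.source])
    # Steps 2 and 3: flag the uncertainty and escalate with context.
    return Outcome("human", handoff={
        "question": query,
        "uncertainty": f"best match scored {best_score:.2f}",
        "candidates": [e.source for s, e in scored if s > 0],
    })


def record_resolution(query: str, answer: str, kb: List[Entry]) -> None:
    # Step 4: the human's answer becomes searchable for similar queries.
    kb.append(Entry(source="human-resolution", text=answer, question=query))


# Hypothetical documentation, invented for this example.
kb = [
    Entry("warranty-sop", "To log a warranty claim, open the service portal "
                          "and attach the unit serial number."),
    Entry("sensor-manual", "Recalibrate the sensor by holding the reset "
                           "button for five seconds."),
]
first = handle_query("log a warranty claim", kb)
second = handle_query("recalibrate sensor after firmware update", kb)
record_resolution(second.handoff["question"],
                  "Run the factory calibration routine before recalibrating.", kb)
third = handle_query("recalibrate sensor after firmware update", kb)
```

In this run the first query resolves at the AI layer, the second is escalated with its candidate sources attached, and the same question resolves at the AI layer once the human's answer has been recorded. That is the ratio shift described above, in miniature.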

The most successful AI support deployments share a common trait: they position the AI as a gatekeeper, not a decision-maker.

The AI doesn't approve purchase orders. It doesn't override safety procedures. It doesn't make calls that require professional judgement. It handles information retrieval, routing, and first-pass answers. The final authority stays with your people.
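One way to enforce that boundary in software is an explicit allowlist: the AI layer may perform only the actions named on the list, and everything else goes to a person by default. The sketch below assumes a hypothetical `Action` enum and `authorise` function; the action names are taken from the examples in the paragraph above.

```python
from enum import Enum


class Action(Enum):
    RETRIEVE_INFO = "retrieve_info"
    ROUTE_QUERY = "route_query"
    FIRST_PASS_ANSWER = "first_pass_answer"
    APPROVE_PURCHASE_ORDER = "approve_purchase_order"
    OVERRIDE_SAFETY_PROCEDURE = "override_safety_procedure"


# Only these actions may be completed by the AI layer (assumed policy).
AI_ALLOWED = frozenset({
    Action.RETRIEVE_INFO,
    Action.ROUTE_QUERY,
    Action.FIRST_PASS_ANSWER,
})


def authorise(action: Action) -> str:
    """Return who holds authority for the action: 'ai' or 'human'."""
    # Default-deny: anything not explicitly allowed goes to a person.
    return "ai" if action in AI_ALLOWED else "human"
```

An allowlist rather than a blocklist means a newly added action is routed to a human until someone deliberately grants it to the AI layer.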

This distinction matters enormously for adoption. When staff see that the AI handles the basics competently and still routes the complex stuff to them, resistance drops. They stop seeing it as a threat and start seeing it as a tool — one that makes their day measurably better.

The organisations that struggle with AI adoption are almost always the ones that tried to automate too much too fast. Start with the first line. Build trust. Expand from there.

Tarin is built around this first-line principle from the ground up. Rather than relying on generic AI models, Tarin ingests your organisation's own documentation — manuals, SOPs, troubleshooting guides, internal wikis — and trains specifically on your knowledge base.

The result is an AI agent that answers routine questions accurately, using your terminology and referencing your actual procedures. When it encounters something beyond its scope, it escalates with full context to the right person. And every escalation makes the system smarter.

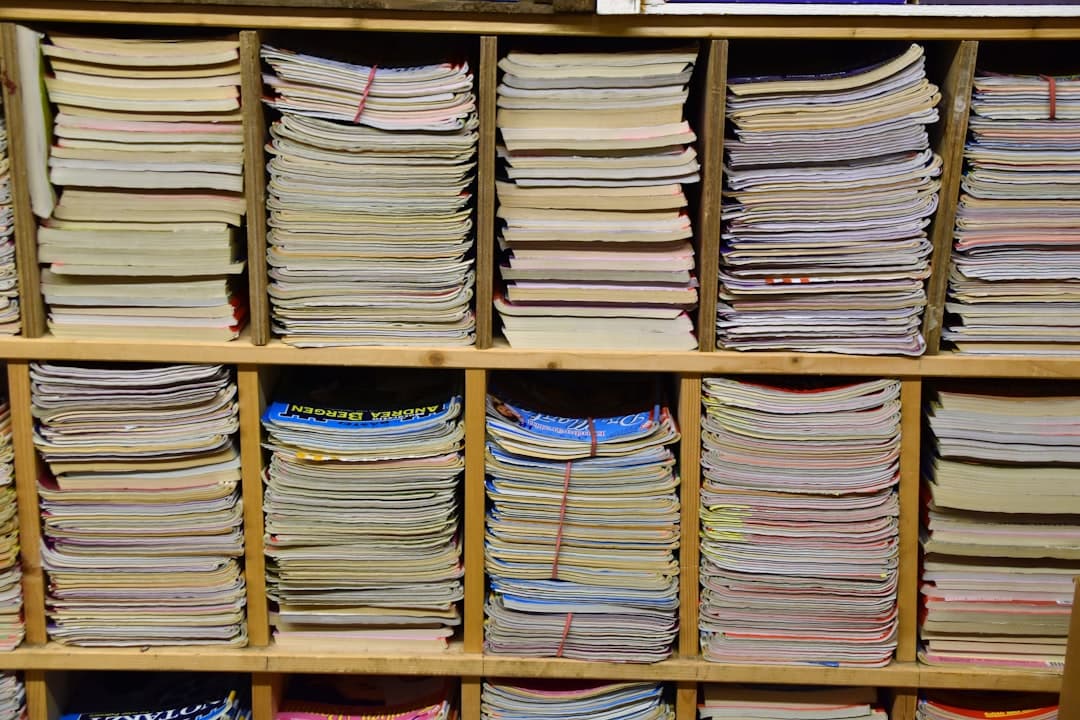

Your team's expertise isn't replaced. It's amplified. The routine queries are handled. The complex work gets the attention it deserves. And your institutional knowledge becomes a living, accessible resource rather than a collection of documents gathering dust.

If you'd like to see how first-line AI support would work with your documentation, request a demo.
