There's a persistent myth that AI works best when you remove humans from the equation. Train the model, deploy it, and let it run. The less human involvement, the more efficient the system.

In practice, the opposite is true, especially in domain-specific applications where accuracy is essential rather than merely nice to have.

What Human-in-the-Loop Actually Means

Human-in-the-loop (HITL) is a model where humans remain actively involved in an AI system's training, operation, and improvement. Rather than treating deployment as the finish line, HITL treats it as the starting point of an ongoing collaboration between human expertise and machine capability.

In a HITL system, humans contribute at every stage:

- Before deployment: Curating training data, validating initial outputs, identifying gaps in coverage, and ensuring the system's knowledge base is accurate and complete.

- During operation: Reviewing AI responses that fall below confidence thresholds, handling escalated queries, and monitoring for errors or drift.

- After each interaction: Providing feedback on response quality, correcting inaccuracies, and supplying additional context that improves future responses.

The human doesn't do the AI's job. The AI doesn't replace the human's judgement. Each contributes what they're best at, and the system improves as a result.

Why Human Oversight Matters for Accuracy

AI models are remarkably capable at pattern matching and information retrieval. But they have a fundamental limitation: they can't assess the real-world validity of their own outputs. An AI system doesn't know whether its answer is correct — it knows whether its answer is statistically consistent with its training data.

In general-knowledge applications, this limitation is manageable. In domain-specific environments — manufacturing, engineering, HVAC, chemical processing — it's critical.

Consider a scenario where an AI system provides a troubleshooting procedure for a piece of industrial equipment. The procedure reads well and follows a logical structure. But a step is missing: a safety isolation step that's specific to the way the equipment is configured on your site. The AI doesn't know the step is missing because that configuration detail wasn't in the training data.

A human expert catches this immediately. They've worked with the equipment. They know the site-specific configurations. They can identify the gap, correct the response, and ensure the correction is fed back into the system so it doesn't happen again.

This kind of expert validation is irreplaceable. No amount of training data substitutes for the contextual knowledge that experienced professionals bring to the review process.

How the Feedback Loop Works

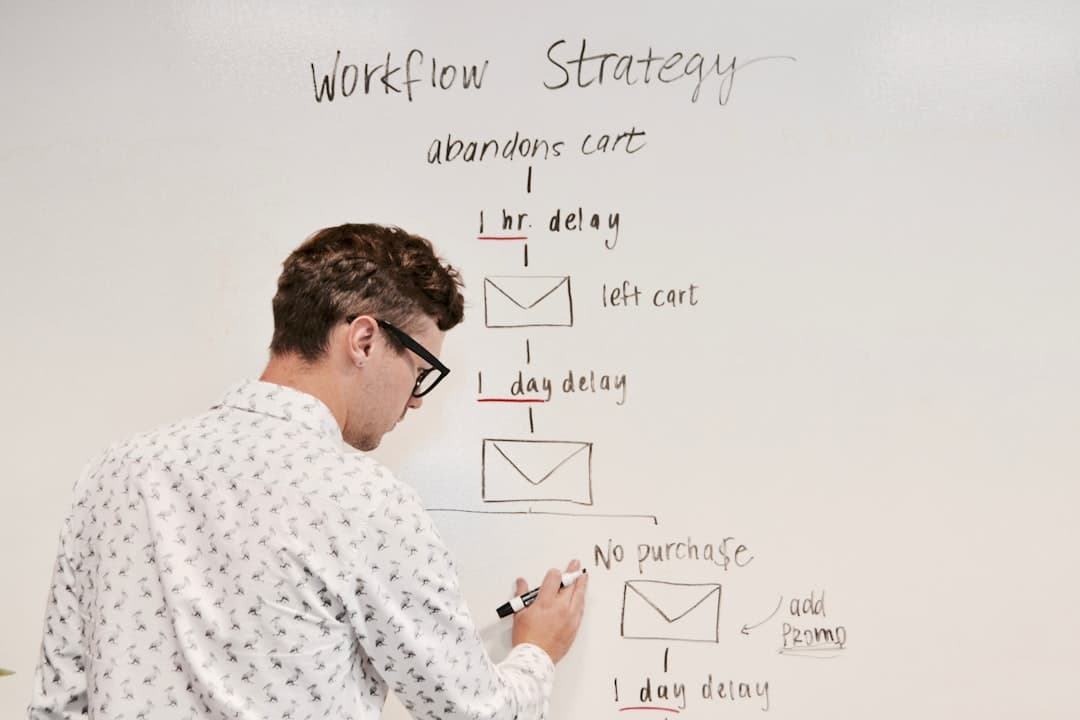

The HITL feedback loop is a structured process that turns human expertise into systematic AI improvement. Here's how it operates:

Stage 1: Initial Knowledge Setup

Before the AI goes live, domain experts help curate and validate the training data. They review the documentation being ingested, flag outdated content, identify gaps, and provide context that helps the system understand relationships between topics.

This isn't a one-time data dump. It's a guided process where human expertise shapes the foundation the AI builds on.

Stage 2: Monitored Operation

Once deployed, the AI operates with built-in confidence scoring. When it's confident in an answer — the documentation is clear, the question is unambiguous, the source material is solid — it responds directly.

When confidence is lower — the question is ambiguous, multiple sources conflict, or the topic is at the edge of the training data — the system flags the response for human review before or after delivery, depending on the stakes.
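The routing rule described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of any particular product: the 0.8 threshold, the `high_stakes` flag, and the names `Route` and `route_response` are all hypothetical and exist only for this example.

```python
from enum import Enum


class Route(Enum):
    RESPOND = "respond directly"
    REVIEW_AFTER = "respond, then queue for review"
    REVIEW_BEFORE = "hold for review before delivery"


# Hypothetical threshold; a real deployment would tune this per domain.
CONFIDENCE_THRESHOLD = 0.8


def route_response(confidence: float, high_stakes: bool) -> Route:
    """Decide how to handle an answer given its confidence score (0.0 to 1.0)."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0.0 and 1.0")
    if confidence >= CONFIDENCE_THRESHOLD:
        return Route.RESPOND
    # Low confidence: whether review happens before or after delivery
    # depends on the stakes of the query.
    return Route.REVIEW_BEFORE if high_stakes else Route.REVIEW_AFTER
```

With these assumed values, a low-confidence answer about a safety procedure would be held for review before anyone sees it, while a low-confidence answer to a routine question would be delivered and reviewed afterwards.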

Stage 3: Expert Review and Correction

Human reviewers assess flagged responses. They confirm accurate answers, correct inaccurate ones, and add context where the AI's response was technically correct but incomplete. Each review decision is logged and structured.
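One way to picture a "logged and structured" review decision is as a small record type. The sketch below is illustrative only: the field names, the `Decision` values, and the validation rule are assumptions chosen to mirror the three outcomes described above (confirm, correct, add context), not a schema taken from any real system.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum
from typing import Optional


class Decision(Enum):
    CONFIRMED = "confirmed"   # answer was accurate as given
    CORRECTED = "corrected"   # answer was inaccurate and replaced
    AUGMENTED = "augmented"   # answer was correct but incomplete


@dataclass(frozen=True)
class ReviewRecord:
    """A single expert review of one flagged AI response (hypothetical schema)."""
    query: str
    ai_response: str
    decision: Decision
    reviewer: str
    topic: str
    revised_response: Optional[str] = None
    reviewed_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )

    def __post_init__(self) -> None:
        # A correction or augmentation is only useful if the reviewer
        # supplied the improved text.
        if self.decision is not Decision.CONFIRMED and not self.revised_response:
            raise ValueError("corrected or augmented reviews need a revised response")
```

Keeping the original response alongside the revised one is a deliberate choice in this sketch: it preserves the before-and-after pair that later improvement steps can learn from.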

Stage 4: Model Improvement

Corrections and confirmations feed back into the system. The AI's understanding is updated. Topics that generated frequent corrections get additional attention. Documentation gaps identified through the review process are flagged for content teams.
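As a rough sketch of how "topics that generated frequent corrections" might be identified, the function below computes a correction rate per topic from logged reviews. Everything here is an assumption made for illustration: the function name, the `(topic, was_corrected)` input shape, and the default cut-offs of 5 reviews and a 30% correction rate.

```python
from collections import Counter


def topics_needing_attention(reviews, min_reviews=5, max_correction_rate=0.3):
    """Return {topic: correction_rate} for topics corrected too often.

    reviews: iterable of (topic, was_corrected) pairs.
    Topics with fewer than min_reviews reviews are skipped, since a rate
    computed from very few reviews is not meaningful.
    """
    totals = Counter()
    corrected = Counter()
    for topic, was_corrected in reviews:
        totals[topic] += 1
        if was_corrected:
            corrected[topic] += 1

    flagged = {
        topic: corrected[topic] / total
        for topic, total in totals.items()
        if total >= min_reviews and corrected[topic] / total > max_correction_rate
    }
    # Worst offenders first.
    return dict(sorted(flagged.items(), key=lambda item: item[1], reverse=True))
```

A report like this could serve both audiences mentioned above: it points to where the AI needs attention and gives content teams a ranked list of topics whose documentation may have gaps.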

The Cycle Repeats

Over time, the system becomes more accurate, more confident, and more aligned with the organisation's actual knowledge. The volume of escalations decreases. The quality of first-pass answers increases. But the human review loop never disappears — it simply shifts focus to edge cases and new topics.

Real Business Benefits

Organisations that implement HITL AI training see measurable advantages over deploy-and-forget approaches:

- Higher accuracy. Continuous human correction limits the error propagation that undermines unsupervised systems. Accuracy tends to improve over time rather than degrade.

- Faster adaptation. When processes change — new equipment, updated procedures, revised regulations — human reviewers flag the changes and the system adapts within days, not months.

- Greater trust. Staff who participate in the review process see firsthand that the AI is responsive to their feedback. This builds genuine trust rather than imposed adoption.

- Team ownership. When your people shape the AI's knowledge, they develop a sense of ownership over the system. It becomes "our tool" rather than "that thing management bought."

- Reduced risk. In industries where wrong answers have real consequences, human oversight provides a safety net that pure automation can't match.

How Tarin Implements Human-in-the-Loop

Tarin's architecture is built around the HITL principle. Every deployment includes:

- Confidence-scored responses that transparently indicate how certain the AI is about each answer.

- Built-in escalation pathways that route uncertain queries to designated human reviewers with full context.

- Review workflows that make it easy for domain experts to confirm, correct, or augment AI responses.

- Continuous learning from every human interaction, systematically improving the model over time.

Your team's expertise isn't sidelined — it's the engine that makes the AI smarter. Every correction, every review, every piece of feedback makes the system more valuable.

If you'd like to see how human-in-the-loop AI would work within your team, request a demo.
